AI’s Potential to Transform the Visual Experience for the Blind

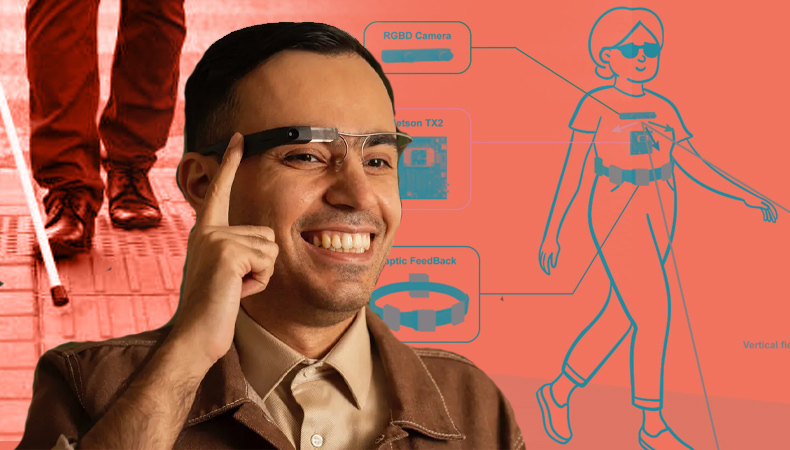

Advances in artificial intelligence (AI) could transform the lives of people who are blind or visually impaired by giving them new ways to perceive and engage with their surroundings. Several assistive technology services now incorporate OpenAI’s GPT-4, a multimodal model that can process both text and images. These services use AI to give visually impaired users detailed descriptions of objects and people, offering them greater independence and access to visual information.


Enhanced Descriptions and Independence

For people like Chela Robles, who lost her sight in both eyes, blindness makes it hard to pick up the subtle cues that support interpersonal communication, such as facial expressions. AI-driven assistive applications like Ask Envision and Be My Eyes, which incorporate OpenAI’s GPT-4, represent a substantial advance. These services describe photographs the user takes and respond conversationally to follow-up questions, providing a wealth of visual information. Users can now browse menus, ask about prices and dietary restrictions, and receive full descriptions of their surroundings.

The Impact of AI Integration

Sina Bahram, a visually impaired computer scientist, underlines the transformative effect of incorporating AI into assistive technologies for people with visual impairments. With GPT-4, these products’ capabilities have advanced dramatically, offering unprecedented levels of detail and more intuitive interaction. Bahram describes using Be My Eyes with GPT-4 to identify the contents of a collection of stickers, something he says would have been impossible a year earlier. Incorporating AI into visual-assistance products could significantly improve the daily lives and independence of blind people.

Challenges and Risks

Although bringing AI into assistive technology is a real achievement, challenges remain. Danna Gurari, an assistant professor of computer science, is well aware of the drawbacks of large language models like GPT-4. These models sometimes produce inaccurate or misleading information, a problem known as “hallucination.” Blind users who rely on these descriptions may unknowingly base decisions on false information, which could have serious consequences.

Biases and errors in the training data of AI models are a further concern. Computer vision systems have historically shown a Western bias, producing less accurate results for several groups, including Asian people, transgender people, and people with darker skin. Flawed models that misidentify characteristics such as age, skin colour, and gender could reinforce these biases.

Striking a Balance: Information and Confidence

Bahram argues that people who are blind should have the same access to information as sighted people. Acknowledging the risks, he recommends pairing AI-generated descriptions with confidence scores. These scores would let users make informed decisions based on the AI’s own estimate of how accurate it is. Bahram stresses the importance of treating people who are blind or visually impaired as equals and not withholding information from them.


AI-powered assistive technology services, particularly those built on OpenAI’s GPT-4, could ultimately change how blind people perceive the world. By providing detailed descriptions of objects and people, these tools increase independence and help people who are blind or visually impaired engage with the world more effectively. However, problems such as biased training data and inaccurate output must be addressed, for example through confidence scoring. AI offers a promising path toward improving the lives of blind and visually impaired people by enabling them to “see” the world in new and meaningful ways.